FabCon 2026 Recap: How Microsoft is turning Fabric into the control plane for trustworthy, AI‑ready data

Explore key announcements from FabCon and SQLCon 2026 through the lens of data quality and observability. Discover what they signal for data engineers, architects, and CDOs building on Microsoft Fabric.

“Building a complete AI-ready data foundation requires unifying your data estate, processing and harmonizing data so it becomes AI-ready, and curating semantic meaning to give agents contextual understanding.” — Arun Ulag, Corporate Vice President of Azure Data at Microsoft

That quote set the tone for FabCon 2026 and signaled a strategic shift: Microsoft now positions Fabric not as an analytics layer, but as the control plane for an enterprise’s entire data estate. From expanded OneLake mirroring and shortcut transformations to OneLake security and Fabric IQ, Microsoft laid out its vision for an architecture where data is always live, always accessible, and always load-bearing. That makes data quality no longer an operational nice‑to‑have, but a hard requirement for AI.

In this blog, we explore the key announcements through the lens of data engineers, architects, and CDOs, with a focus on scaling trustworthy pipelines, enforcing governance, and confidently enabling AI‑native workloads on Fabric and OneLake.

1. OneLake Everywhere: Zero‑copy, Zero‑ETL Meets Governance‑By‑Default

One of the biggest stories at FabCon 2026 was how aggressively Microsoft is expanding OneLake from “Fabric’s storage layer” into a cross‑platform data fabric that connects operational and analytical systems without brittle ETL.

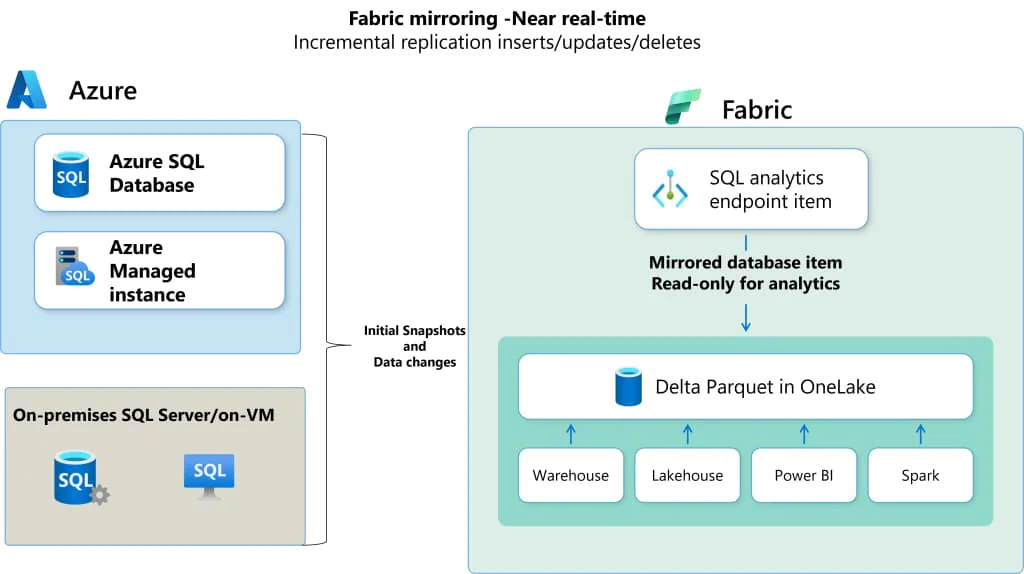

Extended Capabilities in Mirroring: More Sources, Fewer Copies

Microsoft announced new and expanded mirroring and shortcut capabilities that bring more of the enterprise data estate into OneLake with minimal movement:

- Oracle and SAP Datasphere mirroring are now GA, pushing OneLake further into core enterprise systems of record

- New mirroring endpoints (SharePoint lists, Dremio, Azure Monitor) extend visibility into operational, SaaS, and observability data sources

- Mirrored data can now be queried via views and change data feed, turning mirrors into true analytical and AI‑ready assets instead of passive replicas

For data leaders, this is not just about convenience. It’s about collapsing the distance between where data is born and where AI models consume it without exploding your pipeline surface area.

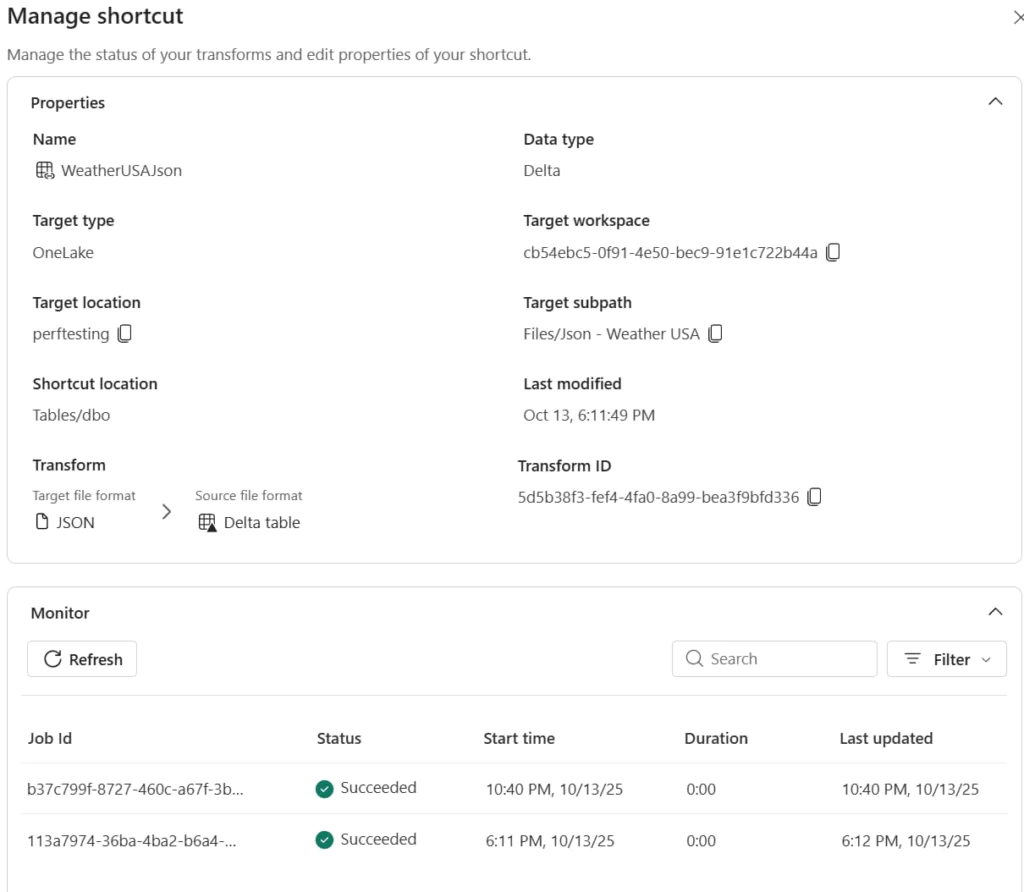

Shortcut Transformations: Data Arrives in OneLake Already Structured, Governed, And Ready To Use

Shortcut transformations reaching general availability may be the most underrated quality-centric announcement of the week.

Data teams can now:

- Convert incoming files and external tables into Delta tables automatically as they land in OneLake

- Apply AI‑powered transformations for tasks like summarization, translation, and document classification on the way in

- Use a new Excel‑to‑Delta transformation (Preview) to pull “shadow IT” Excel sheets into governed Lakehouse tables instead of letting them live as opaque files

In other words, structure and quality can be enforced at the ingestion point, not as a separate, fragile stage downstream. For observability, this is a natural control point to monitor schema drift, volume anomalies, and freshness before data fans out into models, reports, and agents.
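The three ingestion-time checks named above (schema drift, volume anomalies, freshness) can be sketched in a few lines of plain Python. This is a minimal illustration, not a Fabric or OneLake API: `BatchStats`, `check_batch`, the tolerance value, and the sample data are all hypothetical, and in practice the statistics would come from whatever metadata your landing process exposes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class BatchStats:
    """Summary of one landed batch (hypothetical structure for illustration)."""
    columns: dict[str, str]   # column name -> type name
    row_count: int
    last_modified: datetime


def check_batch(stats, expected_columns, typical_rows, max_age, now,
                volume_tolerance=0.5):
    """Return a list of findings; an empty list means the batch passed."""
    findings = []

    # Schema drift: columns that disappeared, changed type, or appeared.
    for name, dtype in expected_columns.items():
        if name not in stats.columns:
            findings.append(f"schema: missing column '{name}'")
        elif stats.columns[name] != dtype:
            findings.append(
                f"schema: '{name}' is {stats.columns[name]}, expected {dtype}"
            )
    for name in stats.columns:
        if name not in expected_columns:
            findings.append(f"schema: unexpected column '{name}'")

    # Volume anomaly: relative deviation from the typical row count.
    if typical_rows > 0:
        deviation = abs(stats.row_count - typical_rows) / typical_rows
        if deviation > volume_tolerance:
            findings.append(
                f"volume: {stats.row_count} rows vs typical {typical_rows}"
            )

    # Freshness: the batch is older than the allowed age.
    if now - stats.last_modified > max_age:
        findings.append("freshness: batch is stale")

    return findings


# Hypothetical example: a type change, a volume drop, and a stale batch.
now = datetime(2026, 3, 20, 12, 0, tzinfo=timezone.utc)
stats = BatchStats(
    columns={"order_id": "string", "amount": "string"},
    row_count=120,
    last_modified=now - timedelta(hours=30),
)
findings = check_batch(
    stats,
    expected_columns={"order_id": "string", "amount": "double"},
    typical_rows=1000,
    max_age=timedelta(hours=24),
    now=now,
)
```

Returning findings rather than raising on the first failure lets a single run report every problem with a batch, which is usually what an on-call engineer wants to see.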

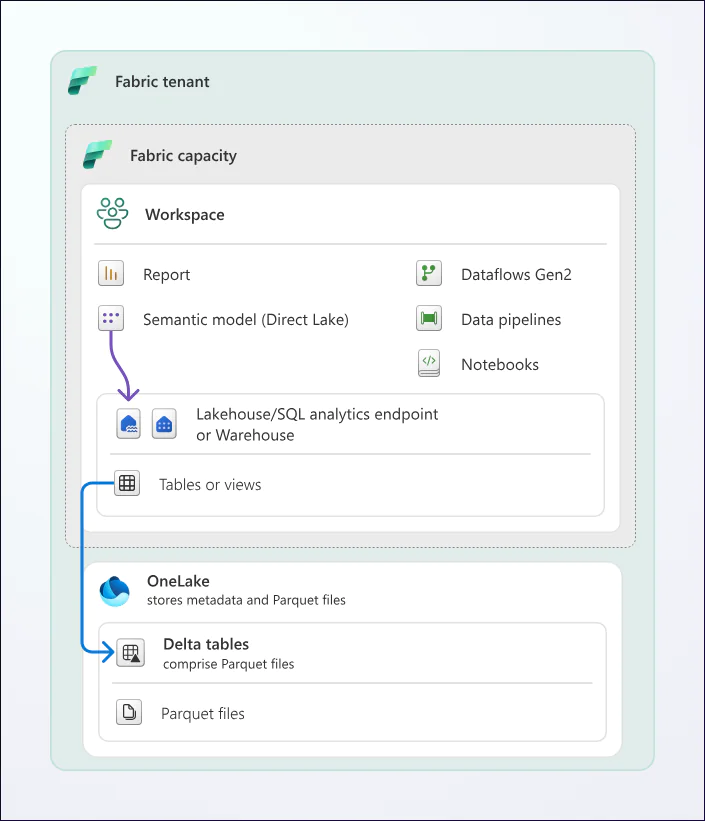

Direct Lake on OneLake: When Data Hits The Lake, It Hits Production

The general availability of Direct Lake on OneLake is the announcement with the most direct quality implication of the entire event. Power BI semantic models can now read directly from OneLake with native security enforcement, richer cross-item modeling, and import-class performance without data movement or scheduled refreshes.

In a traditional import model, the refresh cycle creates a natural buffer: bad data can be caught and fixed before the next refresh pushes it to dashboards. With Direct Lake, that buffer is gone. The moment data lands in OneLake, it is live in reports, accessible to agents, and surfaced to decision-makers. Proactive, continuous monitoring at the lake layer is now the only reliable safety net.

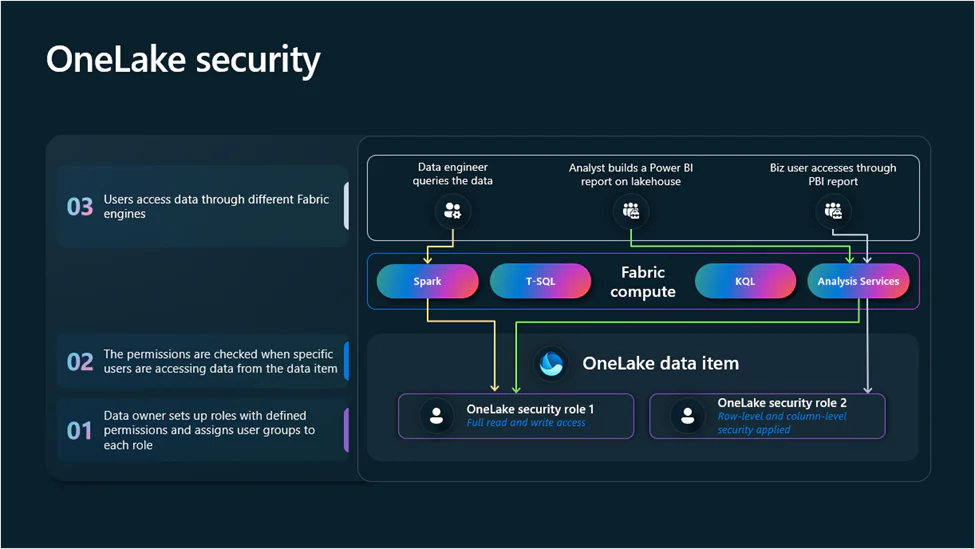

2. OneLake Security Heads Toward GA: Governance & Trust That Follows The Data

Microsoft announced that OneLake security will be generally available in the coming weeks, enabling data owners to define roles, enforce row- and column-level controls, and manage permissions through a single unified model that follows the data wherever it is accessed. Here are some of the key changes:

- Security policies are defined once at the data item level and honored by all downstream consumption paths

- Eventhouse now supports this model for real‑time workloads, meaning streaming analytics can finally share the same fine‑grained controls as batch

- New APIs open the door for third‑party query engines to plug into the same security fabric

This matters for data quality because visibility and trust are tightly coupled. If you want to monitor quality across domains without accidentally exposing sensitive fields, you need a security model that’s both centralized and composable.
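As a rough illustration of monitoring quality without touching restricted fields, the sketch below profiles only the columns a role is allowed to see. It does not call any OneLake security API: `null_rates`, the sample rows, and the `visible_columns` list are hypothetical stand-ins for whatever column set a role definition would grant.

```python
def null_rates(rows, visible_columns):
    """Compute the null rate per column, reading only permitted columns."""
    rates = {}
    for column in visible_columns:
        values = [row.get(column) for row in rows]
        # Fraction of rows where the column is missing or None.
        rates[column] = (
            sum(v is None for v in values) / len(values) if values else 0.0
        )
    return rates


# Hypothetical rows; "ssn" is a sensitive field the monitoring role cannot see.
rows = [
    {"customer_id": 1, "country": "DE", "ssn": "redacted-1"},
    {"customer_id": 2, "country": None, "ssn": "redacted-2"},
]
rates = null_rates(rows, visible_columns=["customer_id", "country"])
```

Because the sensitive column is never read, the monitoring output cannot leak it, which is the property a centralized security model is meant to guarantee for every consumer.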

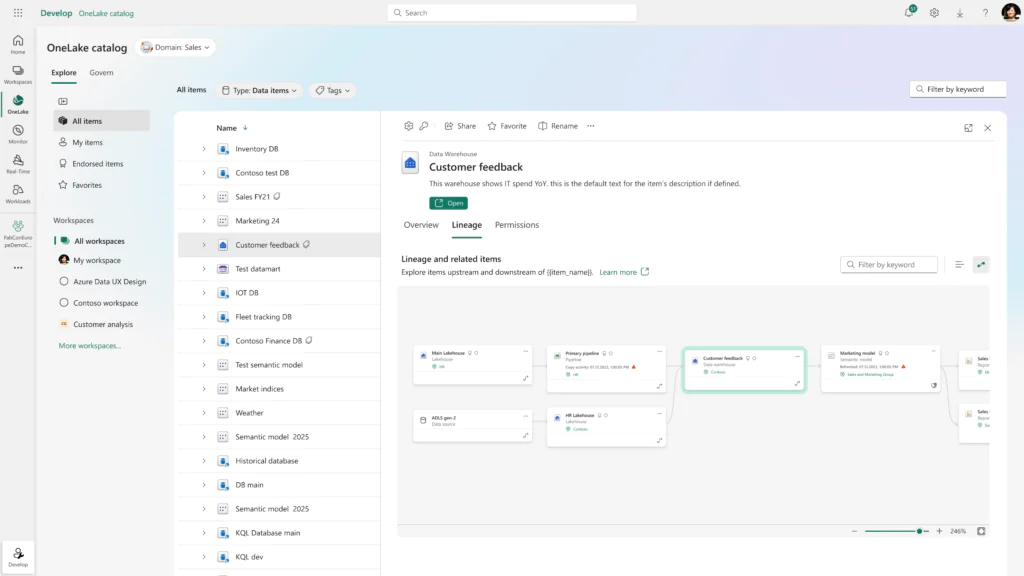

OneLake Catalog: Your Central Hub For Data Discovery, Management, and Governance

The OneLake catalog is evolving beyond “search for tables.” It’s becoming a governance and observability surface for the platform:

- A Govern tab for admins brings together insights on domains, workspace activity, protection coverage, and curation status backed by Copilot explanations

- Workspace tags and auto‑generated semantic descriptions improve discoverability for both humans and agents, surfacing context that observability platforms can leverage for impact analysis

- APIs and a native Fabric MCP server let agentic systems discover data and its metadata programmatically

As the OneLake Catalog becomes the canonical discovery surface for Fabric workloads, organizations that push quality signals back into catalog metadata will dramatically increase the value of that investment. For teams implementing data contracts, SLOs, and lineage‑driven quality programs, this is the layer where technical metadata, business context, and governance signals converge.

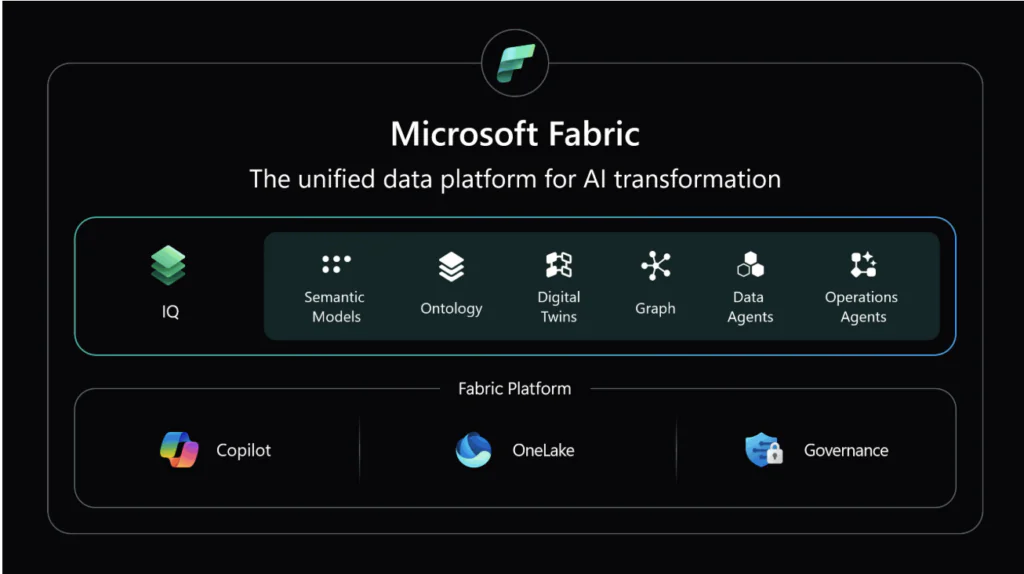

3. Fabric IQ and Fabric Data Agents: Semantics, not just schemas

AI needs semantic alignment, not just clean tables. FabCon brought Microsoft’s response in the form of Fabric IQ and Fabric data agents.

Fabric IQ: Where Raw Data Becomes Business Context For AI

The headline narrative at FabCon was Fabric IQ, which introduces an ontology‑driven semantic layer that combines Power BI’s semantic model technology with Fabric’s graph to give AI agents a contextual understanding of business data. It is the connective tissue between raw OneLake data and agents that can reason about it.

Fabric IQ sits within the broader Microsoft IQ framework, alongside Work IQ (productivity signals from M365) and Foundry IQ (institutional knowledge). Together, they are designed to give every AI agent a shared, enterprise-grade business context. Graph in Fabric, which enables teams to query and visualize complex relationships across customers, partners, and supply chains, will be generally available in the coming weeks.

The implications for data quality and observability are profound:

- Quality rules can be framed in terms of business entities (“orders must ship within X days”, “a customer’s KYC status must be valid”) instead of column‑by‑column checks

- Anomalies detected at the semantic level are more meaningful, e.g., “revenue recognition logic drifted” vs. “metric X changed shape”

- Graph and geospatial support allow teams to reason about complex network and location‑driven behaviors, where naive row‑level checks fall short
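
To make the first bullet concrete, here is a minimal sketch of an entity-level rule, “orders must ship within X days”, written against a business object rather than individual columns. The `Order` class, the `late_orders` function, and the sample data are hypothetical; this is not how Fabric IQ expresses rules, only an illustration of the idea.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Order:
    order_id: str
    ordered_on: date
    shipped_on: Optional[date]  # None means the order has not shipped yet


def late_orders(orders, max_days, today):
    """Return ids of orders violating 'orders must ship within max_days'."""
    violations = []
    for order in orders:
        # An unshipped order is measured against today's date.
        reference = order.shipped_on or today
        if (reference - order.ordered_on).days > max_days:
            violations.append(order.order_id)
    return violations


orders = [
    Order("A-1", date(2026, 3, 1), date(2026, 3, 3)),   # shipped in 2 days
    Order("A-2", date(2026, 3, 1), date(2026, 3, 9)),   # shipped in 8 days
    Order("A-3", date(2026, 3, 10), None),              # open for 10 days
]
violations = late_orders(orders, max_days=5, today=date(2026, 3, 20))
```

The rule reads the way the business states it, and it catches the still-open order that a column-level “shipped date is not null” check would either miss or misreport.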

With Planning in Fabric IQ (developed in partnership with Lumel) entering Preview, the planning layer becomes yet another high‑stakes consumer of this data. Misaligned semantics or low‑quality inputs don’t just skew dashboards; they reshape budgets, forecasts, and AI‑driven decisions.

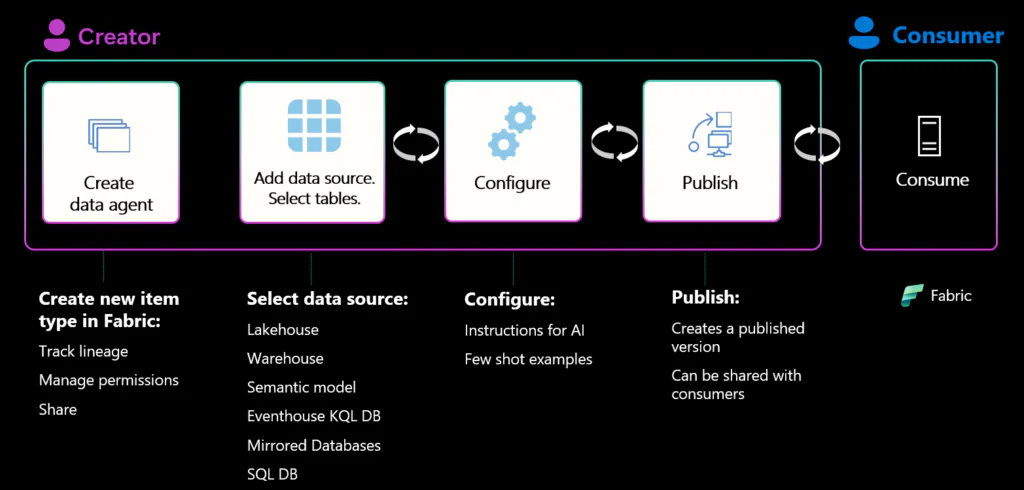

Fabric Data Agents Are Now Generally Available: Domain-Specific AI That Knows Your Business, Not Just Your Schema

Fabric data agents reached GA, enabling domain‑specific “virtual analysts” grounded in enterprise data. Microsoft also previewed operations agents, which monitor real‑time signals and take automated action when they detect patterns or issues.

For observability, this opens up powerful patterns:

- Data agents can proactively surface data quality issues (“this KPI’s input feed is stale”, “this column’s distribution changed after a schema update”) in the same interface decision‑makers already use.

- Operations agents can trigger corrective workflows—rerun pipelines, quarantine suspicious data, or roll back to a last‑known‑good snapshot when anomalies exceed a threshold.

- Because all of this sits on OneLake + Fabric IQ, the same semantic and security context applies across incidents and remediation.
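
The threshold-driven remediation described above can be sketched as a small decision function. This is an assumption-laden illustration, not the operations agent API: `Action`, `decide_action`, and the two threshold values are hypothetical, and the anomaly score is assumed to be normalized to the range 0 to 1.

```python
from enum import Enum


class Action(Enum):
    PASS = "pass"
    QUARANTINE = "quarantine"
    ROLLBACK = "rollback"


def decide_action(anomaly_score, quarantine_at=0.6, rollback_at=0.9):
    """Map an anomaly score in [0, 1] to a remediation action."""
    if not 0.0 <= anomaly_score <= 1.0:
        raise ValueError("anomaly_score must be between 0 and 1")
    # Check the most severe threshold first.
    if anomaly_score >= rollback_at:
        return Action.ROLLBACK
    if anomaly_score >= quarantine_at:
        return Action.QUARANTINE
    return Action.PASS
```

For example, `decide_action(0.7)` returns `Action.QUARANTINE`. Keeping the decision separate from the side effects (rerunning a pipeline, restoring a snapshot) makes the policy easy to test and to audit.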

The net effect: observability signals no longer live in their own tool silo. They can increasingly drive the behavior of agents, applications, and even physical systems.

4. Multi-engine Interoperability: one data estate, one quality standard

Multi-engine interoperability is the clearest signal that Microsoft is building for the enterprise rather than just the Microsoft-native shop. The announcements this week confirm that OneLake is positioning itself as the central coordination layer for heterogeneous data estates, not a destination that everything must migrate to. For data quality teams, that ambition creates a direct imperative: quality must be defined once at the data layer and enforced consistently wherever the data is consumed.

Open ecosystem: Snowflake, Databricks, Iceberg

The headline here was a double announcement on interoperability. OneLake’s integration with Azure Databricks Unity Catalog is now in public preview, and OneLake’s interoperability with Snowflake is now generally available. Teams can natively store Snowflake-managed Iceberg tables in OneLake and automatically access Fabric data from Snowflake without copying it.

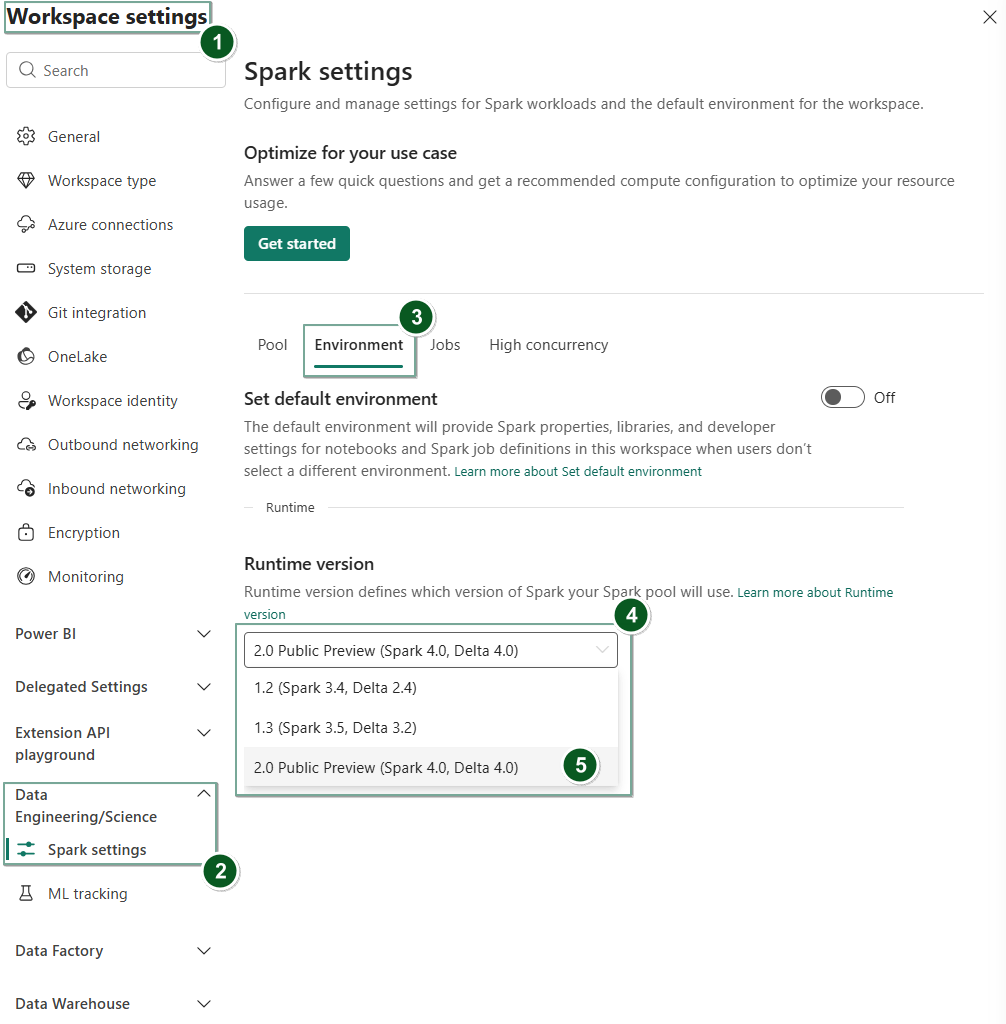

Fabric Runtime 2.0 & Migration assistants: ADF, Synapse, SQL to Fabric

With Fabric Runtime 2.0 (Preview) bringing Apache Spark 4.0, Delta Lake 4.0, and new SQL features to Fabric, teams get more flexibility to process complex data types and build sophisticated pipelines.

Microsoft also announced advanced migration assistants to help customers move from Azure Data Factory, Synapse pipelines, Synapse Spark, and Azure SQL to Fabric.

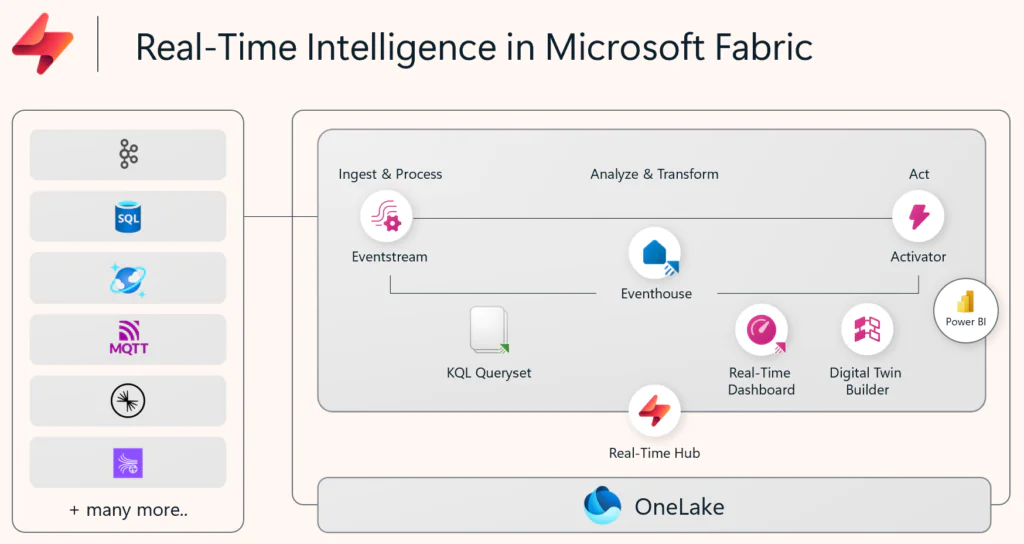

5. Real-Time Intelligence and Eventstreams Deltaflow: Every Database Change Is Now A First-Class Analytics Event

FabCon introduced two new preview capabilities in the Real-Time Intelligence workload. The first is event-driven applications triggered by database changes via Fabric Eventstreams Deltaflow. The second is real-time stream processing with Fabric Eventstreams and Spark notebooks. Together they extend Fabric’s event-driven architecture to a new class of operational data workloads.

The addition of Mapping Data Flows to Fabric Data Factory by June 2026 signals that large-scale transformation pipelines are moving fully into the Fabric platform. dbt job GitHub support, also announced at FabCon, continues the trend toward code-first, version-controlled data engineering within the Fabric ecosystem.

Final Thoughts: What This Means for Data Teams Building on Fabric

FabCon 2026 made one thing clear: the future of enterprise data architecture is open, composable, and AI-native by design.

With innovations like Fabric IQ, Fabric data agents, OneLake security, and OneLake Catalog, Microsoft Fabric is unifying storage, governance, and AI into a single intelligent foundation. AI-native applications now demand governed, real-time access to both transactional and analytical data, delivered through a unified control plane.

As organizations adopt Apache Iceberg and Delta Lake, manage hybrid data pipelines, and deploy AI agents on operational data, the room for error narrows. A single schema drift or freshness issue can skew predictions and erode trust in AI systems.

That’s why trust must be embedded at the data layer, not added after the fact. This is where Telmai adds critical value:

- Continuously monitor and validate OneLake data natively across every Fabric experience without moving data, adding infrastructure, or requiring manual intervention

- Push trust signals back to the OneLake Catalog, so every data product carries its quality state with it

- Surface root causes through AI-powered incident correlation before issues reach downstream consumers

- Expose MCP-compliant trust signals, so your Fabric IQ agents and operations agents can gate on data quality the same way they gate on business logic

Microsoft’s vision at FabCon was an AI-ready enterprise built on trusted data. Telmai is how Fabric teams actually get there.

Talk to our team to learn how Telmai can accelerate access to trusted and reliable data in your Microsoft Fabric ecosystem.
